Algorithmic Idealism: What Should You Believe to Experience Next?

Updated and modified from the peer-reviewed and published version in Found. Phys. 56, 11 (2026). DOI:10.1007/s10701-026-00913-1, arXiv:2412.02826.

Abstract

I argue for an approach to the Foundations of Physics that puts the question in the title center stage, rather than asking “what is the case in the world?”. This approach, algorithmic idealism, attempts to give a mathematically rigorous in-principle-answer to this question both in the usual empirical regime of physics and in some more exotic regimes within cosmology, philosophy, and science fiction (but soon perhaps real) technology. I begin by arguing that quantum theory, in its actual practice and in some interpretations, should be understood as telling an agent what they should expect to observe next (rather than what is the case), and that the difficulty of answering this former question from the usual “external” perspective is at the heart of persistent enigmas such as the Boltzmann brain problem, extended Wigner’s friend scenarios, Parfit’s teletransportation paradox, or our understanding of the simulation hypothesis. Algorithmic idealism is a conceptual framework, based on two postulates that admit several possible mathematical formalizations, cast in the language of algorithmic information theory. Here I give a non-technical description of this view and show how it dissolves the aforementioned enigmas: for example, it claims that you should never bet on being a Boltzmann brain regardless of how many there are, that shutting down computer simulations does not generally terminate its inhabitants, and it predicts the apparent embedding into an objective external world as an approximate description.

Table of Contents

- Prologue: When The World is Not Enough

- Quantum Probabilities as Objective Degrees of Epistemic Justification

- Restriction A: Physics Does Not Always Tell Agents What They Should Believe

- Algorithmic Idealism in a Nutshell

- Algorithmic Information Theory and the Bit Model

- Predictions of Algorithmic idealism

- Example: How to Think About the Simulation Hypothesis

- Conclusions

- Acknowledgments

- References

1. Prologue: When the World is Not Enough

It is the year 2048. You can no longer feel your arms and legs, but you dream of the warm autumn sun breaking through colorful leaves. A blackbird calls from a distance and your daughter smiles at you, a picnic blanket, the shade of the trees. Only the flickering of the ceiling light and the flashing of the surveillance monitors bring you back to reality, and only temporarily, until the warm feeling of the pain medication makes your perception fade.

You are terminally ill. You only have a few days to live, at least that’s what the Doctor says. And yet this realization, in the bright moments between the effects of the morphine and drifting off to sleep, does not fill you with despair: you have taken precautions. Two years ago you signed a contract with AfterMath Ltd.: shortly after your death you will be scanned1This story and most of what follows assumes that agents (at least the ones we mean here, which is ultimately supposed to include humans) can in principle be described classically, and potentially with a finite amount of classical information. This is compatible with the fact that some biological processes are genuinely quantum-mechanical and / or continuous, but a possible intuition is that these details must ultimately be irrelevant for all that matters for us as persons (including conscious experience), since robust functioning seems to forbid dependence on too fine-grained details. Whether this assumption is well-justified is the subject of contemporary debate, with a vast body of literature on e.g. the (un)suitability of “mind uploading”. For a recent proposal against this assumption, claiming that quantum states are at the heart of consciousness, see e.g. G. M. D’Ariano and F. Faggin, Hard Problem and Free Will: An Information-Theoretical Approach, in Scardigli, F. (ed.), Artificial Intelligence Versus Natural Intelligence, Springer, Cham, 2022; arXiv:2012.06580 by a team of specially trained neuroscientists with a particular machine, and your body will be destroyed in the process. A few days later, you will be digitally resurrected in a simulated world on a computer.

You have not made this decision lightly. You have spent years studying philosophy, ethics and neuroscience. In the end, it was thoughts like Bostrom’s fable2N. Bostrom, The Fable of the Dragon-Tyrant, J. Med. Ethics 31, 273-277 (2005); copy on Bostrom’s homepage. and the experimental results of AfterMath that convinced you to go for it. You are pretty sure that you are trying the right thing. And you are hopeful that it will go well.

And yet, you are afraid. You are afraid because you are human. The perception fades, the pain disappears, but the fear and the will to live remain. Your eyes are closed, but you feel the Doctor enter the room. You must have heard his feet scrubbing across the plastic floor of the hospital room, albeit unconsciously.

You clear your throat. At first you can’t get a word out, but then you manage to whisper quietly.

You: “Doctor, I’m so scared…” (coughing) “I know this is not your specialty, but… I need to know! Will I really wake up in the computer simulation?”

You instantly regret having asked, because you know the doctor very well. The doctor is a former physicist, not only a physician, but a physicalist by conviction. He is excellent in his job, but you don’t remember him as particularly empathetic.

Doctor: “Hahaha, you fool! You are asking a non-question! All there is to say is that there is a human being here now, and a computer running a simulation of that thing later. This is all there is to know about the facts of the world.”

The Doctor goes on to refill your infusion. “You’ve paid AfterMath to run that computer simulation, and this is what is going to happen. I don’t even understand what you are actually uncertain about? You know exactly what will be the case in the world!”

| Further resources • Earlier technical paper with all proofs: MPM, Quantum 4, 301 (2020), and a slightly outdated talk video as of 2021. • Recent paper motivating a.id. via “Restriction A” as arising in Wigner’s friend, duplication, ++: C. L. Jones and MPM, arXiv:2402.08727, and slides of a talk at the University of London, 2025. |

While you’re reading: FAQ & clarifications

Q1: Is this an interpretation of quantum mechanics?

A: No. It is a mathematically rigorous, but radical and counterintuitive approach to physical reality more generally. It is strongly motivated by quantum mechanics, but it is a separate theory which aims at making concrete predictions for some scenarios that we do not yet understand.

Q2: What kinds of concrete predictions?

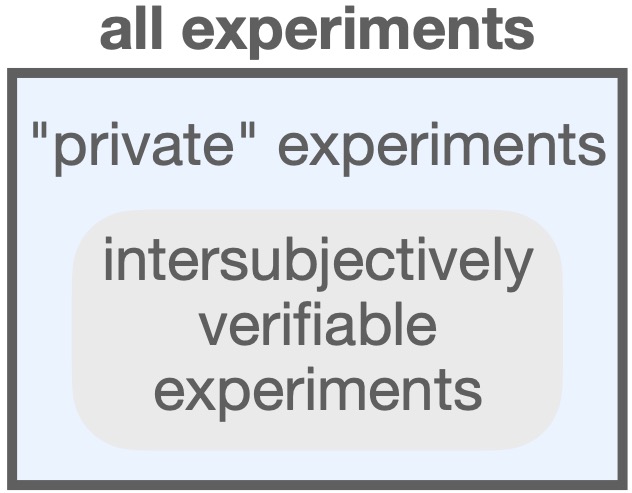

A: Probabilistic predictions for experiments that can be precisely specified, but not necessarily intersubjectively verified. Science is typically concerned with experiments whose results can be seen and analyzed by everybody (the grey area in the picture), and algorithmic idealism’s goal is to extend this regime of applicability to all fundamental physics experiments, including “private” ones.

Science is typically concerned with experiments whose results can be seen and analyzed by everybody (the grey area in the picture), and algorithmic idealism’s goal is to extend this regime of applicability to all fundamental physics experiments, including “private” ones.

Q3: This doesn’t sound like science! What are predictions worth if nobody can check them?

A: The goal is to have a single theory that makes both types of predictions — ones that can be intersubjectively verified, and ones that cannot. If the collectively verifiable “standard” predictions work out fine, then this is evidence for the correctness of the more exotic, private predictions.

2. Quantum Probabilities as Objective Degrees of Epistemic Justification

We usually think that physics is the science of what the world is like. The idea that the contents of our physical theories represent actual things in the world, and that these things have properties that exist objectively and independently of any observer, is a characteristic assumption of many versions of scientific realism3A. Chakravartty, Scientific Realism, The Stanford Encyclopedia of Philosophy (Summer 2017 Edition), Edward N. Zalta (ed.).. And there are good reasons to be a scientific realist: for example, realism allows us to understand that the success of science is not a miracle, but due to the fact that our theories are at least approximately representative of the truth. Intuitively, rejecting realism seems dangerously close to irrationality and pseudoscience4A. Sokal and J. Bricmont, Fashionable Nonsense: Postmodern Intellectuals’ Abuse of Science, New York: Picador, 1999.. And yet, quantum physics challenges5To avoid potential misunderstandings from the outset, algorithmic idealism is not meant to be a new interpretation of quantum theory. It is a theory that aims to make concrete predictions in regimes where they are currently lacking (“private experiments” as discussed in Section 3). In order to do so, it turns out necessary to set standard realist assumptions aside and focus exclusively on the question of the title of this paper. Quantum physics is seen as additional motivation for this focus. The goal is to construct the theory and explore its predictions (some of which are highly counterintuitive), not to have a detailed and philosophically exhaustive discussion of quantum theory, probability, or of all conceptual views that are articulated as motivation for it. at least the most naive versions of realism.

This is evident in the scientific practice of quantum physics. Imagine a particle that has been prepared in a superposition state of two paths in an interferometer. When we measure, we will find the particle in one of the branches, and not in the other. But what is the case before the measurement? A naive stipulation that the particle is in one of the branches, but we do not know which one, leads easily to contradictions with the actual statistics we observe in experiments6In more detail, a contradiction appears if we have a final beamsplitter that leads to perfect destructive interference, and if we naively model the experiment as a probability-1/2 mixture of the two experimental realizations where the particle is deterministically prepared in the upper or in the lower branch, respectively. It is simply a methodological observation that the most straightforward epistemic interpretations tend to fail to explain the experimental results. Making this simple methodological observation formally rigorous is important, but requires substantially more work, e.g. of proving that no noncontextual ontological model reproduces the specific functional form of the quantum uncertainty relations characteristic of the interference experiment, see e.g. L. Catani, M. Leifer, G. Scala, D. Schmid, and R. W. Spekkens, What is Nonclassical about Uncertainty Relations?, Phys. Rev. Lett. 129, 240401 (2022)..

We can certainly write down a wavefunction that describes all there is to say about the particle before the measurement, but as soon as we claim that the wavefunction represents “what is actually the case”, there appears a strange disconnect between the facts that we actually see (the concrete “classical” measurement outcomes) and those before we look (the quantum states). Wavefunctions do not satisfy our basic intuitions about how “facts of the world” should behave. For example, it is provably impossible to observe them directly7W. Wootters and W. Zurek, A Single Quantum Cannot be Cloned, Nature 299, 802–803 (1982).. If we think of the wave functions assigned by some observer as a real property of the physical system that it is supposed to describe, then it would seem to collapse nonlocally and instantaneously upon measurement, in potential tension with relativity (see e.g.8A. Peres, Quantum theory: concepts and methods, Kluwer Acad. Publ., Dordrecht, 2010. for more details)9These observations certainly do not rule out the possibility of realist interpretations of the quantum state. For example, Everettian interpretations evade the need for collapse, at the expense of distinguishing the absolute quantum state of the universe from the branch-relative quantum state assigned by an observer (H. Everett, The Theory of the Universal Wave Function, 1956, in B. S. DeWitt and N. Graham (eds.), The Many-Worlds Interpretation of Quantum Mechanics, Princeton University Press, Princeton, 1973). This is arguably a significant departure from our intuitive view on “facts of the world”. De Broglie-Bohm theory and objective collapse models are further examples of consistent realist interpretations, but whether they can be (or have been already) formulated in a way that avoids conflict with special relativity is subject to ongoing debate (D. Dürr, S. Goldstein, T. Norsen, W. Struyve, and N. Zanghì, Can Bohmian mechanics be made relativistic?, Proc. R. Soc. A 470, 20130699 (2014).; G. Ghirardi and A. Bassi, Collapse Theories, The Stanford Encyclopedia of Philosophy (Fall 2024 Edition), E. N. Zalta & U. Nodelman (eds.))..

Methodologically, this implies that quantum physics does not allow us to rely on our intuitions shaped by naive realism about how things in the world and their properties should behave. Now, the quantum foundations community has rightly pointed out that a lack of intuition, or of imagination, is insufficient to draw any direct metaphysical conclusions. Many phenomena that seem genuinely quantum, such as interference, entanglement, no-cloning, or teleportation, can be reproduced “classically”, i.e. by models based on classical variables that satisfy natural properties such as locality or noncontextuality10R. W. Spekkens, In defense of the epistemic view of quantum states: a toy theory, Phys. Rev. A 75, 032110 (2007); L. Hausmann, A consolidating review of Spekkens’ toy theory, arXiv:2105.03277; L. Catani, M. Leifer, D. Schmid, R. W. Spekkens, Why interference phenomena do not capture the essence of quantum theory, Quantum 7, 1119 (2023).. What is needed are no-go theorems that tell us that it is provably impossible to reproduce a phenomenon classically under such assumptions — but those results exist, and Bell’s theorem11J. S. Bell, On the Einstein Podolsky Rosen paradox, Physics 1, 195 (1964). is the most paradigmatic example of this. It implies that the correlations between spacelike separated events cannot always be explained by a classical common cause, i.e. by a classical hidden-variable model that respects the causal structure of the experimental setup12C. J. Wood and R. W. Spekkens, The lesson of causal discovery algorithms for quantum correlations: Causal explanations of Bell-inequality violations require fine-tuning, New J. Phys. 17, 033002 (2015).. Hence, our realist intuition that the local measurements simply uncover objective, preexisting facts of the world must go wrong, unless locality is violated. In scientific practice, it is then often a methodological move to give up on realist intuitions for quantum systems “in between” measurements.

Acknowledging the limitations of the received realist view becomes actually explanatorily powerful: it allows us, for example, to understand intuitively why “device-independent cryptography” works13J. Barrett, L. Hardy, A. Kent, No signaling and quantum key distribution, Phys. Rev. Lett. 95, 010503 (2005).; R. Colbeck, Quantum and Relativistic Protocols For Secure Multi-Party Computation, PhD Thesis, University of Cambridge, 2006.; S. Pironio, A. Acín, S. Massar, A. Boyer de la Giroday, D. N. Matsukevich, P. Maunz, S. Olmschenk, D. Hayes, L. Luo, T. A. Manning and C. Monroe, Random numbers certified by Bell’s theorem, Nature 464, 1021 (2010).. In this scheme, Alice and Bob perform measurements on two quantum systems that have been prepared in an entangled state, and observe a violation of a Bell inequality on many repetitions. Without any knowledge of the workings of their devices, this allows them in some cases to extract private random bits that are provably unpredictable by any adversary subject to the no-signalling principle. Intuitively, this is because the random bits have not been “facts of the world” before the measurements, and nobody can spy on non-existent bits.

The difficulty of saying what quantum states tell us about the world invites us hence to shift the focus from the standard realist view towards a more empiricist perspective. We can focus on what quantum states definitely do tell us, according to the consensus of the practicing physicists: they tell us what we should expect to observe in an experiment. Given a specification of an experiment which implies a description of the involved quantum states and measurement operators, the Born rule tells us which probabilities to assign to the possible measurement outcomes. In a double-slit experiment, for example, it would be wrong to think that the quantum state tells us through which slit the particle is actually passing; but it tells us what to expect about the place at which we will observe the particle hitting the screen. In other words, Quantum Theory is about what we should believe to observe next.

This methodological diagnosis is compatible with many interpretations of Quantum Theory14This diagnosis is also compatible with QBism, even if the QBists would perhaps not like to phrase it in these terms. They would emphasize that the Born rule is a mere consistency requirement (see e.g. J. B. DeBrota, C. A. Fuchs, and R. Schack, Respecting One’s Fellow: QBism’s Analysis of Wigner’s Friend, Found. Phys. 50, 1859–1874 (2020)). between an agent’s personal assignments of the quantum state, the measurement operator, and the outcome probability. But this implies that any agent that has made up its mind about what quantum state and measurement operator to assign should hold beliefs about outcome probabilities that are given by the Born rule., since they all agree on how to practically use Quantum Theory successfully. But we can go one step further, and claim that this is actually what the quantum state is. This is the content of Berghofer’s degrees of epistemic justification interpretation (DEJI)15P. Berghofer, Quantum Probabilities Are Objective Degrees of Epistemic Justification, arXiv:2410.9175.: “In short, DEJI is an agent-centered interpretation that views quantum mechanics as a single-user theory that allows an experiencing subject to answer the following question: Based on my experiential input, what should I believe to experience next? The input is the wave function and the output is quantum probabilities.”

Berghofer’s interpretation16Berghofer’s interpretation and algorithmic idealism differ significantly: DEJI is an interpretation of quantum theory, and algorithmic idealism (AId) is not. Quantum theory matters for AId in two ways: first, as a motivation to focus on the question of what is observed next; and second, as a “litmus test” to see whether some quantum phenomena are predicted by AId. However, AId is not an interpretation of an existing theory, but a new theory that intends to make novel predictions, as outlined in the next sections. AId benefits from the conceptually rigorous formulation of DEJI, which clarifies its own use of probability substantially. can be understood as a modification of QBism17F. A. Fuchs, Notwithstanding Bohr, the Reasons for QBism, Mind and Matter 15(2), 245-300 (2017); arXiv:1705.03484. (formerly known as Quantum Bayesianism), which views quantum states as subjective degrees of belief. However, given that quantum mechanics is our most successful and fundamental theory of physics, this raises the question of “how objectivity could enter science” in QBism18P. Berghofer, Quantum Probabilities Are Objective Degrees of Epistemic Justification, arXiv:2410.19175.. According to DEJI, quantum probabilities are objective degrees of epistemic justification. In this sense, quantum probabilities tell us something objective about the world: not what is the case in the world, but what we should expect Nature to answer if we ask it a question. In the rest of this paper, we will consider several exotic situations in which agents ask Nature a question. We are not interested in what agents believe, but what type of laws determines what they will actually probably receive as an answer — or, in other words, what they should believe to observe. Hence, Berghofer’s work will be our guiding interpretation when formulating such probabilistic laws.

Q4: Some concrete example predictions?

A: Depending on the concrete mathematical model, algorithmic idealism suggests e.g. an answer to the patient’s question in the story on the left: “will I wake up in the computer simulation?” The answer is probabilistic, and depends on the information-theoretic structure of the patient’s “self” and of the simulation. Algorithmic idealism also says that you should not believe to be a Boltzmann brain, regardless of how many there actually are in the universe, with implications for cosmology. It tells you what to believe under Parfit-like duplication scenarios, or what an Artificial Intelligence should believe when it is copied or duplicated. It says that simulated beings are not in general terminated when their simulation is shut down, for example.

Q5: Even if we are interested in making predictions for these far-out scenarios, why should I trust that this theory predicts correctly?

A: Because it predicts some things that we actually see. For example, in contrast to standard physics which simply assumes this, it predicts that agents will typically make observations that are as if they were embedded into an external world with algorithmically simple, probabilistic laws. Up to perhaps some choices of mathematical details, it is arguably the simplest possible extrapolation of the scientific method from the usual regime to the “far-out” regime.

Q6: In what sense is it an extrapolation of the scientific method to another regime?

A: Among other things, science takes observed data and uses a method of induction to predict future observations. Universal induction is a version of this method that can be mathematically formalized, based on algorithmic information theory. Under versions of the Church-Turing thesis, it makes provably correct physical predictions in the standard regime. Algorithmic idealism can be interpreted as the simple prescription: “Just assume that universal induction would in principle work in all regimes“.

Q7: Idealism is dead. It can never explain why there seems to be a lawlike external physical world.

A: Algorithmic idealism is not actually a form of idealism; it starts with a notion of “self” rather than “world” (hence the name), but does not fit nicely into the philosophical category. For example, it is not related to consciousness directly; most importantly, the existence of an external world governed by simple laws is not a miracle, but a prediction of algorithmic idealism. In this sense, it overcomes the arguably most serious problem of idealism.

3. Restriction A: Physics Does Not Always Tell Agents What They Should Believe

Berghofer’s interpretation is but one example of a broader class of “neo-Bohrian”19M. E. Cuffaro, The Measurement Problem Is a Feature, Not a Bug –Schematising the Observer and the Concept of an Open System on an Informational, or (Neo-)Bohrian, Approach, Entropy 25(10), 1410 (2023). interpretations, which include Healey’s pragmatism20R. Healey, Quantum Theory: a Pragmatist Approach, British J. Philos. Sci. 63(4), 729–771 (2012)., Brukner and Zeilinger’s21Č. Brukner and A. Zeilinger, Information and fundamental elements of the structure of quantum theory, arXiv:quant-ph/0212084. views, Bub’s information-theoretic interpretation22J. Bub, Bananaworld. Quantum Mechanics for Primates, Oxford University Press, Oxford, 2016., Rovelli’s relational interpretation23C. Rovelli, Relational Quantum Mechanics, Int. J. Theor. Phys. 35, 1637 (1996)., and others. The exact differences between these views, and how to best delineate them from other views and each other, are not relevant for the purpose of this paper. The conclusion that I argue to be drawn at this point is simply this: both scientific practice and a class of interpretations (most explicitly, Berghofer’s) suggest that Quantum Theory is best understood as telling an agent what they should believe to experience next.

It is not necessary to agree with any one of these interpretations to share the broadly empiricist sentiment that the question of “What will I see next?” is an epistemically more modest and perhaps more fruitful one than “What is really the case in the world?”. After all, the world might be a much weirder place than we have ever imagined, and humans have a bad track record of guessing the counterintuitive metaphysics of modern physics a priori. Methodologically, it makes sense to concentrate on the requirement that physics should allow us, at the very least, to make successful predictions. This includes predictions that can be intersubjectively verified, but also private ones that are in some sense irreducibly relative to an observer24Physical theories do not directly predict all intersubjectively communicable outcomes of experiments, but only those that are in some precise sense objectively definable; and similarly, algorithmic idealism does not aim for predictions of all outcomes of private experiments. For example, physics allows us to predict probabilistically whether we will hear a detector click, and algorithmic idealism says that we should be able to do the same even if the outcome is private (as in a duplication or Wigner’s friend type experiment). However, neither physics nor algorithmic idealism address directly questions of qualia, say, whether e.g. an agent will observe beauty or joy or be conscious next. We may certainly believe that these supervene on the precisely definable properties, but to understand how exactly this is the case might be so difficult to characterize that it may be a better strategy to consider the study of this question a different field of inquiry..

It may at first sight seem unlikely that there should be any relevant sought-for predictions of this “private” type, but here I will argue that there are in fact plenty, and that some of them are of utmost importance for physics, philosophy, and humanity at large. The question raised by the patient in Section 1 is an archetypical example of this. The answer to the patient’s question “Will I really wake up in the computer simulation?” can only be verified privately, not intersubjectively. That is, any claim of an answer cannot be empirically tested externally; say, by running the scanning and simulation process on a large number of people, and doing statistics about how many times the respective test person has actually woken up in the simulation. The answer to the question is by construction independent of any facts of the world25The problem here is much deeper than the use of indexicals per se. For example, the question of “Where am I?” can be rephrased as asking “Where is this particular patient [insert name and ID number, or some other unique external identifier] currently located?”, i.e. deindexicalized, and an answer can be derived from facts of the world. In Adlam’s terminology (see E. Adlam, Against Self-Location, arXiv:2409.05259), we can always use our beliefs about what is the case in the world to set our superficially self-locating credences, in contrast to pure self-locating credences which are subject to Restriction A. that any third-person view could discover26We can certainly imagine a (contrived) theory that claims, for example, that the patient will experience waking up in the simulation if and only if the temperature in the hospital room was less than 30∘C at the time of scanning. Then learning a third-person fact (the temperature) would tell us the answer to this first-person question, assuming that this theory is true. However, simply learning the temperature and inferring all consequences of this as predicted by our current physical theories, or as predicted by any other future physical theory that makes only intersubjectively verifiable claims, does not tell us anything about how to answer this question.. We can certainly ask the simulated person whether they feel like they have just woken up from the death bed, but the answer will unequivocally be “yes”, regardless of whether this has actually happened, or whether the actual patient has subjectively died and been replaced by a seemingly identical clone with implanted false memories.

A typical physicalist reaction to this question would be to deny that this is a meaningful distinction to be made in the first place: after all, there is no “fact of the world” that could ground the answer this question27The problem is not whether it would actually be possible to scan the human being and simulate it in a functionally equivalent way — as mentioned in the first footnote of this paper, here we always make the working assumption that it is. The point is rather that there is no meaningful question to be asked from a physicalist third-person perspective, as articulated by the Doctor in Section 1: the question “what will probably happen to me?” is not a question about facts of the world, as its answer is independent of any claims about e.g. how matter behaves.. The disadvantage of this reaction is that it robs us of any hope for guidance if we actually face this situation (like you did in Section 1), which might well be the case within the next century or so. Furthermore, as the Boltzmann brain (BB) example below will demonstrate, there are situations in physics where we have encountered questions of a similar type (such as “should I believe that I will make a BB-type observation next?”) whose answers cannot be grounded on facts of the world either (even though it may at first seem that they could).

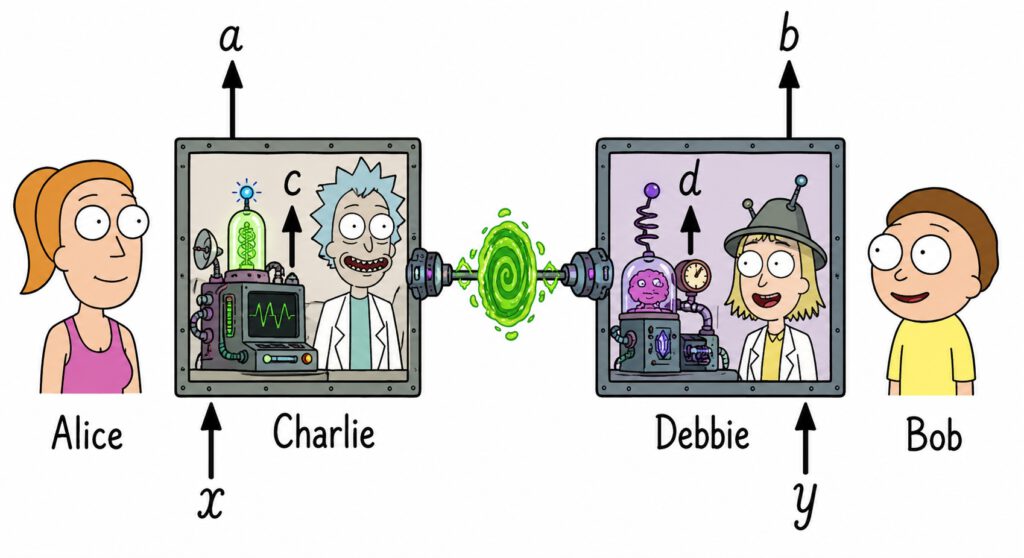

Indeed, recent no-go theorems tell us that we cannot always ground our beliefs about our own future observations on expected objective properties of the world, unless we give up important principles such as locality28The locality assumption of Bong et al. (K.-W. Bong, A. Utreras-Alarcón, F. Gharafi, Y.-C. Liang, N. Tischler, E. G. Cavalcanti, G. J. Pryde, and H. M. Wiseman, A strong no-go theorem on the Wigner’s friend paradox, Nat. Phys. 16, 1199–1205 (2020)) is much weaker than the locality assumption in Bell’s theorem: it does not refer to hidden variables, but expresses no-signalling for actually observed outcomes (“the probability of an observable event is unchanged by conditioning on a space-like-separated free choice z, even if it is already conditioned on other events not in the future light-cone of z.”). This is necessary to avoid conflict with relativity.. Consider the thought experiment sketched in Figure 1 by Bong et al.29K.-W. Bong, A. Utreras-Alarcón, F. Gharafi, Y.-C. Liang, N. Tischler, E. G. Cavalcanti, G. J. Pryde, and H. M. Wiseman, A strong no-go theorem on the Wigner’s friend paradox, Nat. Phys. 16, 1199–1205 (2020)., which is a Wigner’s friend-type30E. P. Wigner, Remarks on the Mind-Body Question, in Symmetries and Reflections, 171–184, Indiana University Press, Bloomington, Indiana, 1967. scenario, but extended to involve four observers and an entangled quantum state. An external observer can repeat the experiment many times, and record the outcomes a and b of the agents Alice and Bob, given their choices of settings x and y. Doing so, this observer may determine a relative frequency estimate of the probabilities \( P(a,b|x,y) \), and compare the result with the quantum predictions. Assuming empirical adequacy of Quantum Theory, i.e. the correct prediction of the outcome probabilities of actually observed events in this scenario, Alice and Bob can use these quantum predictions to determine what they should believe to observe in this experiment: Alice should assign the probability $$ P(a,x)=P(a|x,y)=\sum_b P(a,b|x,y) $$ to seeing outcome a if she chooses setting x (the first equality is due to the no-signalling principle, since Alice and Bob are spacelike separated). A similar calculation applies to Bob. If we only consider Alice and Bob, then this resembles a very familiar story as we could also have told it in the context of, say, classical statistical mechanics: Alice can determine the probabilities she should assign to her own future observations by marginalization of the joint probability distribution over all relevant facts of the world at the moment of observation.

In Quantum Theory, we cannot always assign joint probability distributions to all variables that may, counterfactually, potentially be observed in the future, as Bell’s theorem demonstrates. However, we could have hoped that there is still a “classical” regime of the world where we can talk about variables in the usual sense that are jointly distributed, if we restrict our consideration to variables that are actually observed. Indeed, this is typically the case in our every-day world: all humans share a common Heisenberg cut, and everything that we observe during our lifetimes can (so far) be shared with all other humans. All observations (outcomes, experiences) are part of a large Boolean algebra of propositions, and we can think of a joint probability distribution that corresponds to what any hypothetical external observer should believe about the world. What we ought to believe about our future observations should then be derived from this as a marginal probability distribution, at least in principle (in practice we certainly use heuristic simplifications and shortcuts).

However, this situation will change as soon as we implement extended Wigner’s friend experiments such as the one described above in our world. In this case, the results above tell us that the observations a, b, c, d made by Alice, Bob, Charlie and Debbie, respectively, do not belong to a Boolean algebra of propositions of the type just described. That is, if the externally observed statistics \( P(a,b|x,y) \) violates a so-called local friendliness inequality, then there cannot for all x, y exist a joint probability distribution \( P(a,b,c,d|x,y) \) of the observations of all four observers from which \( P(a,b|x,y) \) derives, unless either the principle of locality or of no-superdeterminism (or both) are violated. But if there is no such distribution, then the four observers cannot all use the “standard methodology” to determine what they should expect to see in the experiment. That is, the four observers cannot start with what they believe about the facts of the world (including \( a \), \( b \), \( c \), \( d \)), and from this deduce their own small part of this (the marginal distribution on \( a \) and \( b \)). Depending on one’s interpretation of Quantum Theory, one might conclude that Charlie and Debbie can individually use the Born rule to obtain probabilities \( P(c) \) and \( P(d) \), but the validity of these assignments can never be verified externally.

In a recent publication32C. L. Jones and M. P. Müller, On the significance of Wigner’s Friend in contexts beyond quantum foundations, arXiv:2402.08727., we have introduced some terminology that aims at describing the essential core of this observation:

Restriction A (informally): Our physical theories do not (and sometimes cannot) give us joint predictions for the future observations obtained by all agents.

More formally, Restriction A is a property of any given theory T (such as Quantum Theory, supplemented by additional assumptions such as locality and no-superdeterminism), and predictions are assumed to be formalized as probability distributions. Restriction A may apply to some scenarios (models, experiments) within T, but not others. It applies to N = 4 observers in the Wigner’s friend scenario above, and it applies to a single observer, i.e. N = 1, in the simulation scenario of Section 1.

The cases of N = 1 and N ≥ 2 have quite different interpretations. For N = 1 agent, Restriction A points at a deficiency of the given physical theory: the agent might want to predict its own future observations, but cannot. For N ≥ 2 agents, Restriction A says that the agent cannot obtain such predictions by predicting properties of the external world that are facts for all agents. We will return later to the question of how to interpret this.

Moreover, suppose that the theory T is intersubjectively empirically complete in the following sense: for all experiments for which the results can be recorded and shared among all external observers (not necessarily among all participants in the experiments, such as Charlie and Debbie), the theory T actually supplies us with a statistical prediction for this experiment. If this is the case, then Restriction A only applies to theory T in scenarios where the agent’s observations cannot be externally recorded; in other words, where there is some sort of “epistemic horizon”33J. Fankhauser, Epistemic Boundaries and Quantum Uncertainty: What Local Observers Can (Not) Predict, Quantum 8, 1518 (2024); J. Fankhauser, T. Gonda, and G. De les Coves, Epistemic Horizons From Deterministic Laws: Lessons From a Nomic Toy Theory, Synthese 205, 136 (2025); J. Szangolies, Epistemic Horizons and the Foundations of Quantum Mechanics, Found. Phys. 48, 1669 (2018). that separates some of the observers from some others. This is indeed the case in Bong et al.’s thought experiment: as we have already seen, it is impossible to record the outcomes a, b, c, d in every single run of the experiment, and to obtain statistics that can, say, be published in a scientific journal. The problem is that asking Charlie or Debbie for their outcomes will in general disturb the experiment, and will prevent the violation of a local friendliness inequality. Similarly, in the simulation scenario of Section 1, it was impossible for any third person (such as the Doctor) to even decide whether the event that the agent was wondering about has actually happened or not in any single implementation. After all, this was the reason for the Doctor to dismiss the patient’s question: a physicalist might want to claim that the third-person facts of the world are all there is to say, and the main characteristic of a “fact” is its intersubjective external accessibility.

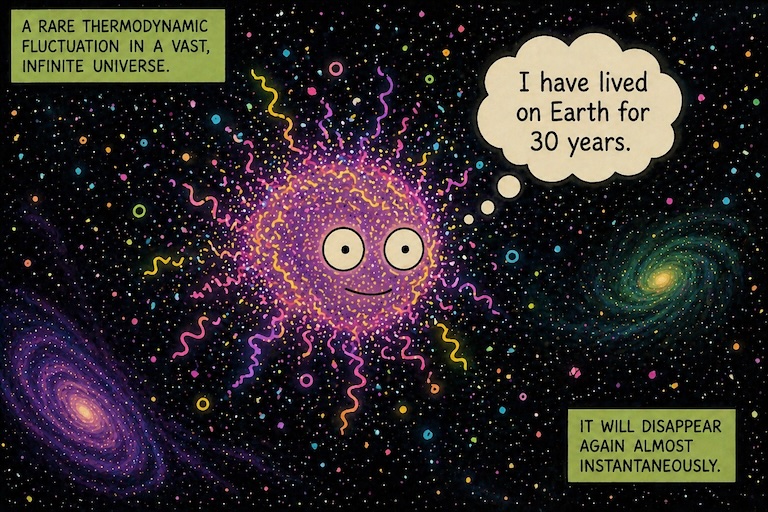

In Ref.34C. L. Jones and M. P. Müller, On the significance of Wigner’s Friend in contexts beyond quantum foundations, arXiv:2402.08727., we have explained in detail why we think that Restriction A is at the core of several other enigmas in the Foundations of Physics and Philosophy. Here I only briefly sketch two of them. The first puzzle is cosmology’s Boltzmann brain problem. For a thorough introduction, I refer the reader to Carroll’s work35S. M. Carroll, Why Boltzmann brains are bad, Current Controversies in Philosoph of Science, Routledge, 7–20 (2020), arXiv:1702.00850.; the following is an extremely brief and cartoonish summary. Suppose that we have a model of the universe that predicts it to be combinatorially large. In this case, thermodynamic fluctuations will produce all kinds of unlikely events in this universe, including Boltzmann brains: local collections of matter that, by mere chance, are functionally equivalent to a human brain, complete with false memories and thoughts such as “I have lived on Earth for 30 years”. Now, what if our cosmological model predicts that there are many more (of the order, say, 10100) Boltzmann brains than “ordinary” brains that have evolved on planets? An intuitive thought is that, in this case, we should expect to be Boltzmann brains. But Boltzmann brains would typically make some very unexpected observations soon (if they don’t disintegrate right away), such as seeing high-temperature radiation instead of the usual night sky. Since this is not what we observe (and we do not seem to disintegrate either), we seem to not be Boltzmann brains36As pointed out by Carroll (S. M. Carroll, Why Boltzmann brains are bad, Current Controversies in Philosoph of Science, Routledge, 7–20 (2020), arXiv:1702.00850), a more thorough and careful argumentation should revolve around the notion of cognitive instability: if a physical theory gives us reasons to believe that we are Boltzmann brains, then this theory undermines all reasons to trust its predictions in the first place.. Does this mean that we have just falsified the corresponding cosmological model?

It is important to note that cosmology, or our current physical theories more generally, only tell us (an estimate of) the number of Boltzmann brains and, perhaps, of ordinary brains, given some cosmological model. But they do not tell us what you should believe if you wonder whether you are one or the other — in particular, which probability you should assign to making an extraordinary Boltzmann-brain-type observation next (conditioned, say, on still being there in the next moment). For example, in order to claim that counting frequencies should give you those probabilities amounts to accepting something along the lines of Elga’s Principle of Indifference37A. Elga, Defeating Dr. Evil with self-locating belief, Philos. Phenomenol. Res. 69(2), 383–396 (2004); download from author’s homepage, which represents a logically independent addition to our physical theories38In C. L. Jones and M. P. Müller, On the significance of Wigner’s Friend in contexts beyond quantum foundations, arXiv:2402.08727, we argue that this principle is not as well-motivated as it might first seem, and that there are other plausible choices too, including ones that would lead to very different conclusions from counting.. Clearly, it would be beneficial for cosmologists to be able to use this reasoning to rule out Boltzmann-brain-dominated models, but Restriction A for N = 1 observer applies: physics does not tell you whether you should believe that you are a Boltzmann brain.

A second class of puzzles where Restriction A applies are situations that resemble Parfit’s teletransportation paradox39D. Parfit, Reasons and persons, Oxford University Press, Oxford (1984).: these are scenarios where a given observer is undergoing some sort of duplication procedure (think of the Star Trek episode “The Enemy Within”). For example, suppose that you will be scanned in all detail (while destroying the original, as in Section 1), and a large number N of copies will be created which will all make different observations in the future. What should you believe about your future experiences in this case? At first sight, it seems like assigning equal probability to every copy is natural, but what would you do if, in fact, an infinite number of copies were created, and with increasing n, the nth copy will more and more deviate from the original, but only ever so slightly from copy n to n + 1? What if the copies are created also at different times? We have no idea what to say in this case, and our physical theories have nothing to say about what you should believe to experience next in this scenario40I do not believe that it is relevant to acknowledge that we will not practically run into a scenario of this form any time soon: our way to respond to those puzzles should in principle apply to all logically conceivable situations; if it does not, this is a sign of incomplete understanding. Furthermore, Everettians in particular might want to acknowledge that this scenario is perhaps not that extraordinary after all.. This is an instance of Restriction A for N = 1 observers. In41C. L. Jones and M. P. Müller, On the significance of Wigner’s Friend in contexts beyond quantum foundations, arXiv:2402.08727., we construct a more elaborate version of a multiplication thought experiment that demonstrates an instance of Restriction A for N = 2 observers, robust to future changes of our physical theories, which resembles a probabilistic version of the Frauchiger-Renner paradox42D. Frauchiger and R. Renner, Quantum theory cannot consistently describe the use of itself, Nat. Commun. 9, 3711 (2018)..

Algorithmic idealism, as described below, can be understood as a reaction to Restriction A in the following sense. In this conceptual framework, Restriction A for any given single agent (N = 1) is seen as a serious problem that needs to be alleviated, because it reveals the inability to predict something about the results of actual experiments that somebody can do. For example, you can in principle (and, in the perhaps not so far future, in practice) agree on being copied into a computer simulation in some sense, and see what happens to you. Despite the fact that the outcome cannot be intersubjectively shared (as explained above, this is a prerequisite for Restriction A to even apply in the first place), it seems absurd to believe that there was nothing that could be said (probabilistically, say, or plausibilistically43T. Fritz and M. Leifer, Plausibility measures on test spaces, arXiv:1505.01151., or in some other sort of mathematical formulation) about which sort of outcome of this experiment you ought to expect to experience. After all, the world will kick back at you and generate some subjective outcome, and something must determine the nature of this outcome. Even claiming that nothing can be said in this case is just an utterance of a human being in need of clarification, which means in need of mathematical formalization. Indeed, algorithmic idealism suggests a particular resolution of Restriction A for N = 1 agents via Postulate 2 below (and via its formalization in some specifically chosen mathematical model).

However, algorithmic idealism does not claim to resolve Restriction A for N ≥ 2 agents, but rather suggests to accept it: according to its predictions, different agents do not in general share a common world into which they seem to be embedded. Instead, “objective reality” is an approximate and emergent statistical phenomenon that does not apply in all cases (see Subsection 6.3 below). Indeed, Restriction A for N ≥ 2 observers (and the recent results in Ref.44K.-W. Bong, A. Utreras-Alarcón, F. Gharafi, Y.-C. Liang, N. Tischler, E. G. Cavalcanti, G. J. Pryde, and H. M. Wiseman, A strong no-go theorem on the Wigner’s friend paradox, Nat. Phys. 16, 1199–1205 (2020). showing that, under some natural assumptions, all future physical theories will suffer from it) is taken as corroborating evidence for the correctness of algorithmic idealism’s strategy to drop the assumption that agents are fundamentally embedded into external worlds.

Q8: I am a realist, and I don’t care about the this sort of nonsense theory.

A: Everybody is free to ignore my approach, but I would invite you to recall why you are a realist in the first place: probably it is your value not to fool yourself and to hold on to the scientific method; probably you want to say that the things science talks about are actually real in some strong sense of the word; and maybe you take a passionate stance against pseudoscience, mysticism and other nonsense. Algorithmic idealism is on board with all of this. It does not claim that the world is “not real”; it simply claims that it is not fundamental. It is a mathematically fully rigorous approach, and one that admits several possibilities of falsification. The goal is not to answer philosophical questions such as “what is the world really made of?”, but to make concrete predictions, albeit for unusual scenarios (see above).

Q9: Aren’t you making unnecessarily speculative claims by dropping most of our intuitive metaphysical assumptions?

A: Being speculative and being counterintuitive are two very different things. It is true that many philosophers regard it as a weakness of a theory if it is counterintuitive, and if it implies “extravagant metaphysics”. I think this is a mistake: all essential progress in physics that we have made in the past has demolished some of our previous intuitive metaphysical assumptions.

Q10: If “having an intuitive metaphysics” is not one of your values, then what are your values?

A: These include mathematical rigor, conceptual clarity, simplicity, and empirical adequacy. Certainly, for a theory to be interesting, it should also do something for us, e.g. make concrete predictions for some scenarios that we are interested in. Beyond that, I do not think that it matters whether the theory happens to be in line with human intuition. Our intuition has evolved to help us survive and reproduce, but not to understand reality beyond its correlation with these goals.

Q11: Algorithmic idealism predicts that the external world is a computer program. But our world is continuous, not discrete! Aren’t you following a naive “digital physics” paradigm?

A: Algorithmic idealism does not claim that the world is discrete in any naive sense. It only models the state of the observer as discrete (think of the data in your observations and memory), but even this is not a necessary feature and can be changed by changing the model. Regarding the external world, the relevant feature is not cellular-automaton-type naive discreteness of spacetime, but a physical version of the Church-Turing thesis: given a description of some experiment, there is an algorithm that computes the probabilities of the possible outcomes. Note that this is compatible with different notions of continuity; for example, many continuous mathematical structures (including those described by differential equations) admit finite mathematical definitions. A.id. predicts that the external world is essentially a computational process, but it does not claim that it resembles — intrinsically for its “inhabitants” — in any way the computational models that we humans have introduced, such as Turing machines or cellular automata.

Q12: But the world is quantum, not classically probabilistic! However, all I see in your formalism are statistical probabilistic statements, not quantum states.

A: The empirical predictions of quantum theory are all about probabilities of outcomes of measurements (or recordings or experiences), and this exhausts everything that we have ever seen in any experiment. These Born rule probabilities behave in unexpected, “nonclassical” ways, e.g. they violate Bell inequalities in a particular causal structure (the Bell scenario) where bare classical probability theory predicts no such violations. But they are still probabilities. In particular, as shown in my 2020 paper, a quantum computation is a valid “computational ontological model” according to my definitions, and hence a possible external world into which an agent may find itself embedded. An external quantum world is perfectly compatible with algorithmic idealism.

Q13: Still, you could have built in the formalism of quantum theory right from the start! Why have you not done it?

A: Because my hope is that some nonclassical aspects of quantum theory can be shown to be predictions of algorithmic idealism. (Or conversely, if it turns out that completely classical phenomenology is typically predicted for agents, then this is an opportunity of falsification of a.id.). In my 2020 paper, I have indeed given a preliminary result (a valid theorem, but “preliminary” because it still rests on too many assumptions) which shows that the violation of Bell inequalities, but preservation of the no-signalling principle can sometimes be expected to result from a.id. Intuitively, this is because “resetting an agent’s self state resets its external world”, leading to statistical consequences (akin of “cosmological detection loopholes” etc.) that are counterintuitive and indeed impossible in a classical and standard ontology.

Q14: What is algorithmic idealism’s relation to QBism and to the many-worlds interpretation?

A: I have the highest respect for people who work on both of these interpretations and its variants (including other neo-Copenhagen interpretations), and am deeply interested in both. If I were a realist, I would subscribe to the many-worlds interpretation. However, the whole point of algorithmic idealism is not to be a realist in the traditional sense, because traditional realism is an in-principle-obstacle for even attempting to come up with a rigorous theory that addresses the questions that algorithmic idealism targets (recall Section 1). But I believe that no experiment we will ever do will be in contradiction with many worlds, and I am technically strongly interested in it, because its burden to derive the Born rule for branching observers has some overlap with a.id.’s burden to define transition probabilities between self states.

As for QBism, I have a deep affection for its views, and certainly agree with its tenet that a quantum state should best be viewed as a “catalog of probabilities”, and that these are probabilities of an agent’s future experiences. However, I believe that there is both room for and necessity of a notion of probabilities that is closer to our idea of “objective chances” rather than “personal beliefs”. (To clarify: I believe that both notions can peacefully coexist within the same formalism.)

Q15: So, then, what is your interpretation of quantum theory?

A: You see some answers in the main text. I find Berghofer’s modification of QBism appealing, useful, and to large parts in line with how I think about the quantum state. In particular, I think that physics deals with probabilities that do not say what you believe, but what you should believe.

But more importantly, I am simply not interested in interpreting quantum theory if we understand the word “interpretation” in a traditional sense: as telling a convenient story about “what is really going on in the world”. I do not believe that the notions of “world” and “really going on” have any foundational significance. I am however very much interested in the interpretation project if it is understood more broadly: as understanding what quantum theory teaches us about the nature of reality. Proposing algorithmic idealism is (among other things) a contribution to this project.

Q16: Can algorithmic idealism be tested?

A: In some specific sense yes, but there is a lot to be said here. What most people will have in mind is a sort of “gold standard” of testing: a theory makes a new and unexpected prediction, experiments are built that test the prediction, and the prediction is confirmed. But complying with this gold standard has become increasingly difficult in fundamental physics, and perhaps impossible, over the last couple of decades. For example, nothing like this has ever been possible for any candidate theory of quantum gravity. Indeed, it is even notoriously difficult to show that a given theory of quantum gravity reduces to general relativity on large scales, which would be an excellent first test.

Similarly, a first test of a.id. is whether it reproduces our standard view of physics to good approximation: for example, the view that we find ourselves in an external world that evolves according to some simple, probabilistic laws; or the general fact that these probabilities seem to be behave nonclassically (and ultimately quantumly). The former is already a proven theorem, and the latter is work in progress (with some preliminary results). There are many other ways in which a.id. could fail to reproduce things that we see; for example, it could predict large fluctuations of the “laws of nature” which we do not observe, and be ruled out on that ground.

A second way to test a.id. is by checking whether it admits a consistent treatment of the kinds of conceptual puzzles that it targets, such as the Boltzmann brain problem. It might fail to do so and lead to similar kinds of “cognitive instability” as pointed out by Carroll; but, as explained in the main text, things look good on this front (but further testing is required).

A third way of testing follows from recalling what types of predictions a.id. actually targets: experiments that can be privately, but not intersubjectively performed and analyzed. Hence, one can in principle test a.id. by private experiments. This may involve science fiction scenarios such as the simulation problem of Section 1. But it also includes another experiment that we will all perform one day, and very privately so: death. Some models of a.id. predict that we should not think of death as transitioning into a kind of “eternal unconscious state”, but rather as “falling out of the universe” in some sense. Due to the “no-erasure problem”, a.id. does not currently make any concrete claims about this. But if a model is developed that does, then it can in principle be tested privately in this way (though you might forget on the way what you are actually testing).

4. Algorithmic Idealism in a Nutshell

How do we respond to Restriction A? In the case of N ≥ 2 agents, the nonexistence of a joint probability distribution for the observations of all agents can be interpreted45It certainly does not have to be interpreted in this way (e.g., one might drop some background assumptions such as locality that underly the corresponding no-go theorems), but it is a well-motivated and natural possibility. as evidence that we should not always regard observations or events as absolute (see e.g.46F. A. Fuchs, Notwithstanding Bohr, the Reasons for QBism, Mind and Matter 15(2), 245-300 (2017).; J. B. DeBrota, C. A. Fuchs, and R. Schack, Respecting One’s Fellow: QBism’s Analysis of Wigner’s Friend, Found. Phys. 50, 1859–1874 (2020).; H. Zwirn, Are Events Absolute?, arXiv:2507.14672.), and algorithmic idealism as described below will agree with this. The case of Restriction A for a single agent, N = 1, is however even more troublesome: it means that an agent can perform an experiment where they will learn some outcome, but there is nothing that can be said about what they should expect to observe. As explained further above, I regard this as absurd, and interpret it as a shortcoming of our given theories. If one takes this viewpoint, how does one react to it?

One option is certainly to double down on physicalism, and to insist on saying that “what should I expect to experience next?” is not usually a well-defined question. In this view, we should see this as a kind of pragmatic question that sometimes does not have an answer, and that will often have a vague answer, similarly as the question “Are viruses alive?”

However, here I suggest to explore a second option, which is to assume that (a specific version of) this first-person question does always have an objective answer, and to work out a theory that aims at supplying this answer at least in principle. The motivation to do so is two-fold: pragmatically, we will at some point be in desperate need of guidance in exotic situations such as the one of Section 1, and a precise theory that offers such guidance and that is also in agreement with the standard physics predictions in mundane situations seems to be a good tool to aim for. Furthermore, as I have argued in Section 2, Quantum Theory suggests that the question of “what should I expect to observe next?” is a more fundamental, natural and fruitful question to ask than “what is the case in the world”. We may see this as a hint from modern physics that we can hope to get closer to the truth by attempting to answer the former rather than the latter, and we can hope that trying to do so may indirectly tell us something interesting about the world, too.

The goal of algorithmic idealism is to implement this second option. Despite its name, I am aiming for a formal, mathematically rigorous approach for which higher-level concepts such as consciousness or belief do not play any fundamental role.

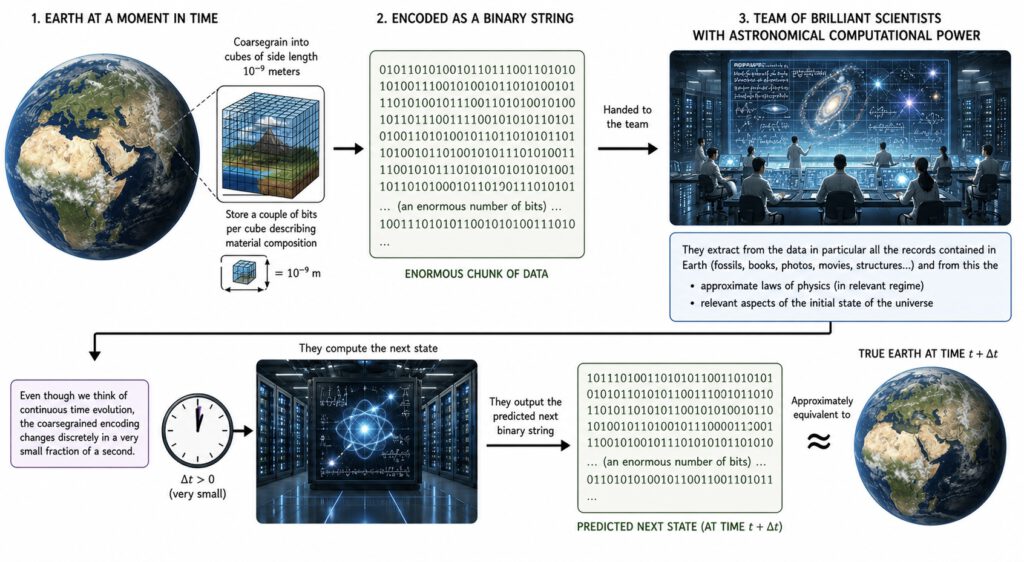

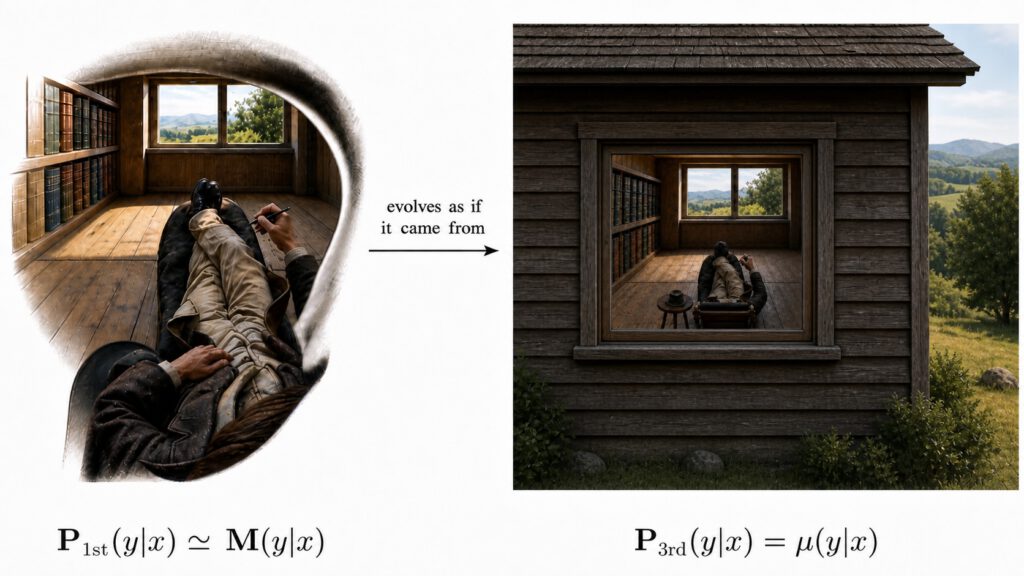

A first step is to put aside the concept of “belief” and instead to focus on the current state of the observer (for any given single, subjective moment), in an abstract, information-theoretical sense. For a human observer, for example, this could be the pattern47D. C. Dennett, Real Patterns, J. Philos. 88(1), 27 (1991); link on PhilPapers. of neuronal connections in their brain (this description is only meant to be helpful for our intuition and not to become an actual part of the theory). This corresponds roughly to what Parfit called a “person stage”48D. Parfit, Reasons and persons, Oxford University Press, Oxford (1984).. Among other things, this implies that “agents” or “observers” will not be primitive notions of algorithmic idealism, but only momentary “snapshots” of the pattern, structure or information content that defines an agent or observer at some given moment. These will be called “self states”49In the earlier publication M. P. Müller, Law without law: from observer states to physics via algorithmic information theory, Quantum 4, 301 (2020)., these have been called “observer states”., see Figure 2 for an illustration and further comments.

A second step is to let go of all human-centric aspects and to pursue a completely abstract approach: a self state can be any sort of pattern, in some mathematically, abstractly defined set of all possible patterns. Only a tiny subset of those patterns would deserve to be interpreted as describing something like the momentary state of an “observer” (let alone a human or conscious observer). At the level of fundamentality that we are targeting, it would be completely off the mark to introduce a specific model of an observer, or to make any specific assumptions about its composition or function. The most modest and thorough approach is a maximally abstract one, in which all aspects of how we think of observers or agents are tentatively treated as contingent.

This leads us to a formulation of the first postulate of algorithmic idealism:

Postulate 1 (Self States). There is a set \( \mathcal{S} \) of “self states”, the collection of patterns that one can be at any given subjective moment. Everything that is to be said about a given agent at some moment, including all inferences to be drawn about its past or future, is determined by its self state.

In particular, and perhaps most counterintuitively, algorithmic idealism denies that agents are fundamentally embedded into some external world: an agent at some given moment is completely characterized by its self state (which is a standalone pattern), and not by its spatiotemporal location inside some external world. In cases such as the Boltzmann brain problem, for example, where there are many locally indistinguishable copies of an agent, it rejects the usual “self-locating uncertainty” intuition that the agent is actually one of the copies, but does not know which one. Instead, according to algorithmic idealism, the agent (at that given subjective moment) is its self state, and this abstract pattern just happens to be represented, or realized, in each one of the copies. In some sense, the agent should think of itself as the equivalence class of all these realizations (ordinary brains and Boltzmann brains and all other, potentially completely different, realizations).

At first sight, this seems absurd: after all, will the agent not make another observation soon that will either confirm or disprove its concerns of being a Boltzmann brain, and hence retrospectively say that it was actually the copy on the planet, and not the random fluctuation out there? On second thought, however, the apparent contradiction gets resolved by two insights. First, algorithmic idealism is constructed to avoid the “standard methodology” described in Section 3, and to allow agents to predict what they will see next without grounding this on the expected state of the world into which they are embedded. Hence, by construction, what they should believe cannot depend on whether they are “actually” surrounded by a planet-like environment or high-entropy radiation. Second, as will be explained in Section 6, algorithmic idealism predicts that agents can often think of themselves as “effectively embedded”: what happens to them will often look to them to excellent approximation as if they were a part of some external world with algorithmically simple, probabilistic laws of nature. In the cosmology example, this results in the consequence that the agent will probably experience “planetary business as usual” next, regardless of the number of Boltzmann brains, even though the question of whether it actually is a Boltzmann brain or not is ill-defined. This will be discussed in more detail in Section 6 below.

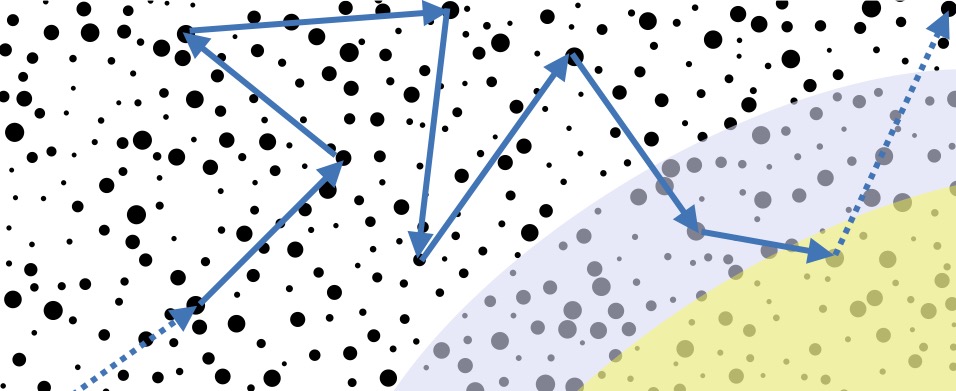

Dropping the standard methodology, we now need a postulate that implies what an agent51I have remarked above that the notion of “agent” is not a primitive of algorithmic idealism, but only the notion of a “momentary snapshot” in terms of a self state. However, it will still be linguistically convenient to use the words “observer” or “agent” in the colloquial descriptions, simply because it would otherwise be difficult to convey the right intuitions for us human beings who are used to think in terms of things that persist over time. should believe to experience next, and since we are not grounding this on an external world in the usual sense, the answer can only depend on the agent’s momentary self state. Since we have decided to do without vague terms such as “belief” or “experience” in algorithmic idealism, this question has to be replaced by another one: If an agent is in self state \( x \) now, in which self state \( y \) will it probably be next? By construction, we cannot rely on our usual laws of physics to ground this question. Instead, a fundamental idea of algorithmic idealism is to rely on a weaker assumption that underlies our trust in empirical science in the first place: that induction is possible. More specifically, I implement this idea in the form of the following postulate:

Postulate 2 (State Change). If you are in self state \( x \) now, you will be in another self state \( y \) next, and which one this will be manifests itself as a random experiment for you. The likelihood of some \( y \) next, given \( x \) now, has an objective value, which expresses what you should believe will happen to you next, if you knew your current self state. The mathematical structure that defines its value formalizes a universal method \( M \) of induction.

Induction, broadly speaking, is a method to predict future observations by extrapolating regularities of past observations. What I mean by “universal” is a method that does not only work under the assumptions that we navigate within our own universe, but in a large class of conceivable environments. The possibility of inductive inference is one of the main assumptions of the scientific method. Here, we stipulate that induction should in principle always be possible, even in exotic situations, but in the following indirect way: there is a method of induction \( M \) such that the likelihood of becoming \( y \) next, given that one is \( x \) now, is exactly the one that is predicted by \( M \). In other words, a hypothetical third person that uses method \( M \) could correctly (albeit not deterministically) predict any other agent’s future self state, if it were given a complete description of that agent’s current self state. A consequence of Postulate 2 is that regularities that are present in your current self state will tend to persist in your private future, because methods of induction assign high probability to this being true.

Probabilities obtained by some method of induction are naturally interpreted in a Bayesian way: many such methods begin with a certain choice of prior, and apply Bayesian updating upon obtaining new information (this is in particular true of Solomonoff Induction, used in the bit model below). However, here, the mathematical formulation of a method of induction is detached from its epistemic origins: rather than describing someone’s beliefs about an unknown future, the values that it defines mathematically are now interpreted as some sort of objective chance of turning from some given self state to another one. In more detail, they are supposed to be interpreted in a similar way as Berghofer’s DEJI interprets quantum probabilities: not as expressions of what somebody believes, but what they should believe if they were told the current self state52This does not imply that the agent itself (which is in self state \( x \) now) can use method \( M \) to predict its own future experiences directly. After all, the agent will not in general have complete knowledge of \( x \), and some self states will represent observers that are incapable of reasoning, or that are simply patterns which defy any interpretation as “observer” or “agent”. However, agents like us who are able to reason rationally may still use the resulting model of algorithmic idealism to predict something about their future observations. This can be done either by working out general predictions of algorithmic idealism that are independent of the specific form of the current self state \( x \), or by analyzing the consequences of some (incomplete, but non-zero) knowledge of \( x \)..

Postulates 1 and 2 are intentionally formulated in such a way that they admit of several possible rigorous mathematical formulations, or models. For every choice of self states \( \mathcal{S} \) and method of induction \( M \), one obtains a different model. One simple, preliminary model, the bit model, has been introduced in Ref.53M. P. Müller, Law without law: from observer states to physics via algorithmic information theory, Quantum 4, 301 (2020).. It will be described in Section 5 below. Different mathematical models (and the associated methods of induction) may rely on different mathematical definitions of likelihood, e.g. on a single conditional probability distribution, on a collection of several such distributions (which is the case for the bit model below), on a notion of imprecise probability54P. Walley, Statistical Reasoning with Imprecise Probabilities, Monographs on Statistics and Applied Probability, Springer Science and Business Media, 1991., or on plausibility measures55T. Fritz and M. Leifer, Plausibility measures on test spaces, arXiv:1505.01151; N. Friedman and J. Y. Halpern, Plausibility Measures: A User’s Guide, in P. Besnard and S. Hanks (eds.), Proceedings of the Eleventh Conference on Uncertainty in Artificial Intelligence (UAI1995), Morgan Kauffman, 1995.. The precise conceptual interpretation of these “values” of likelihood, as currently sketched in Postulate 2, may have to be adapted on a case-by-case basis. Algorithmic idealism should therefore be seen as a kind of conceptual framework rather than a single theory, because it admits many different mathematical realizations.

As an analogy, consider the general idea that physical space could be curved rather than flat, or more generally, non-Euclidean in some way. This single idea has many possible mathematical realizations that make very different physical predictions, even though some predictions may be universal (such as a violation of the triangle postulate). The question of which one of the resulting theories is correct (if any) is ultimately a matter of empirical observation and experiment. In fact, the idea that non-Euclidean geometry applies to our world predates the theory of relativity. In 1872, the German astrophysicist Karl Friedrich Zöllner56H. Kragh, The first curved-space universe, Astronomy & Geophysics 53(5), 5.13–5.15 (2012). suggested that we may live in a non-Euclidean curved universe. This allowed him to suggest a solution to what is known as Olbers’ paradox: if the universe had positive curvature, it could have finite volume without having a boundary, which would allow us to understand why the night sky is dark. Ultimately, further experimental results, such as the perihelion precession of Mercury’s orbit or the outcome of the Michelson-Morley experiment, were necessary to arrive at yet another modification of the conceptual framework which ultimately resulted in General Relativity: that we should consider curved spacetime rather than space. Nevertheless, it would be fair to say that Zöllner’s idea turned out to be essentially correct: finiteness of a non-Euclidean universe resolves Olbers’ paradox.

In hindsight, while Zöllner should not have hoped to guess the correct theory of the universe a priori, he would have been correct to anticipate the general field of mathematics that would be involved in its formulation: differential geometry, because this is the arena of reasoning that allows us to describe spatial geometries which are locally familiar but potentially globally unusual. We can similarly guess what field of mathematics will be relevant for all formalizations of algorithmic idealism. In the standard picture of an agent or observer, we would say that the observer’s state contains a large amount of memory and experiences of an external world; that is, variables which are correlated with the variables that comprise relevant aspects of the outside universe. This is the paradigmatic scenario described by (standard) information theory57T. M. Cover and J. A. Thomas, Elements of Information Theory, John Wiley & Sons, 2006.: essentially, an observer’s state would correspond to information, and it would be information about an external world. However, algorithmic idealism concerns the structure of the observer (at some given moment) and its regularities (because a method of induction must be applicable) without reference to anything external. We need an area of mathematics that is concerned with the structural regularities in a bare, concrete collection of standalone data, not in a random variable that is potentially correlated with another variable. Such a field exists: algorithmic information theory (AIT)58M. Hutter, Universal Artificial Intelligence – Sequential Decisions Based on Algorithmic Probability, Springer, Berlin, Heidelberg, 2005.; M. Li and P. Vitányi, Kolmogorov Complexity and Its Applications, 3rd Edition, Springer, 2008., and hence the name of the approach.

Q17: So you’re proposing a “theory of everything”? That’s prepostorous — and it puts your work high up on my crackpot index!

A: This is not a theory of everything! It has nothing to say about almost all questions that most physicists are interested in. For example, it will tell you nothing about quantum gravity or particle physics. It is true that its main hypothesis makes a highly unusual foundational claim, offering an explanation for why we see simple laws of physics in the first place. However, it only predicts the algorithmic simplicity and probabilistic nature of these laws, not their specific form. Indeed, by claiming that these are contingent, algorithmic idealism predicts the unavoidability of its own limitations.

5. Algorithmic Information Theory and the Bit Model

AIT is concerned with the information content of individual chunks of data, usually described in terms of the finite binary strings, \( \{0,1\}^*=\{\varepsilon,0,1,00,01,10,11,000,001,\ldots\} \), where \( \varepsilon \) is the empty string, or the integers, \( \mathbb{N}_0=\{0,1,2,3,4,5,\ldots\} \). The most well-known quantity studied in AIT is Kolmogorov complexity: if \( x \) is a binary string or an integer, then \( K(x) \) denotes the length of the shortest program for a universal Turing machine U that produces \( x \) as its output. In the following, let us focus on the binary strings for convenience. Suppose that we generate a string \( x \) of length \( n \) by tossing a fair coin \( n \) times. Then, with high probability, \( K(x)\approx n \), because the shortest computer program to generate \( x \) will typically be “print \( x \)”, i.e. a program that lists all the bits of \( x \). On the other hand, if \( x \) is a string of \( n \) zeros, \( x=\underbrace{000\ldots 0}_n \), then \( K(x)\approx\log n \), because we only have to tell the Turing machine how many times it has to print a zero, which can be done by specifying the approximately \( \log n \) (in base 2) binary digits of \( n \). Similar reasoning applies to the first \( n \) binary digits of \( \pi \), or of \( \exp(\sqrt{2}) \), for instance.

The exact definition of Kolmogorov complexity depends on some choices of the Turing machine model that is used in its construction. For example, Kolmogorov complexity \( K \) defined above is usually defined for so-called prefix-free Turing machines (for which every program needs to describe where it ends), but there is another version called \( C \) for “bare” machines without this requirement. The fact that there are many possible definitions of its basic quantities implies many different possibilities to potentially ground a formalization of algorithmic idealism on AIT.

AIT also allows us to ask, for example, how likely it is that the Turing machine \( U \) generates some given output \( x \), if it is supplied with random input. How this is defined exactly depends on choices about the details of the working of the Turing machine. For prefix-free Turing machines, the textbooks give the definition of algorithmic probability $$m(x):=\sum_{p:U(p)=x} 2^{-\ell(p)},$$ where \( \ell(p) \) denotes the length of the binary string (program) \( p \). This is interpreted as the probability that \( U \) outputs \( x \) on some input that is chosen by a sequence of fair coin tosses. It turns out that $$-\log m(x)=K(x)+\mathcal{O}(1).\tag{1}\label{eq1}$$ This notation means that the difference between \( \log m(x) \) and \( K(x) \) is bounded in absolute value by a global constant that is independent of \( x \). This example already demonstrates some features of the scientific practice of AIT: researchers in this field are typically not interested in the actual values of quantities like \( K(x) \) or \( m(x) \) (in fact, these numbers are typically not computable), but in the scaling of these quantities with respect to other quantities such as the length of \( x \), or the comparison of such quantities59In some sense, this resembles scientific practice in physics, where we sometimes say things like “Suppose that the interaction energy \( \Delta \) is small compared to the total energy \( E \)” etc.. This attitude also eliminates most of the worries arising from the freedom of choice of universal Turing machine \( U \): for any other universal machine \( V \), we would have \( K_U(x)=K_V(x)+\mathcal{O}(1) \), where \( K_U \) denotes Kolmogorov complexity as measured with respect to the machine \( U \). That this sort of curious interplay between exact definition and (sort of) vague comparison-type application leads to a meaningful field of inquiry (and, in fact, to immense mathematical beauty) is a fact that cannot be conveyed in terms of the theory itself, but only be experienced when actually working with AIT.